OpenAI is easy to frame as just a model vendor. That undersells what matters now. The more relevant story for operators is that OpenAI is steadily packaging models, tools, workflow design, retrieval, evaluation, and deployment surfaces into something closer to an operational AI stack.

That shift matters because most organizations are no longer asking whether they can call an LLM API. They are asking how to stand up governed internal assistants, workflow agents, document-backed decision support, and repeatable automation without turning every use case into a custom engineering program.

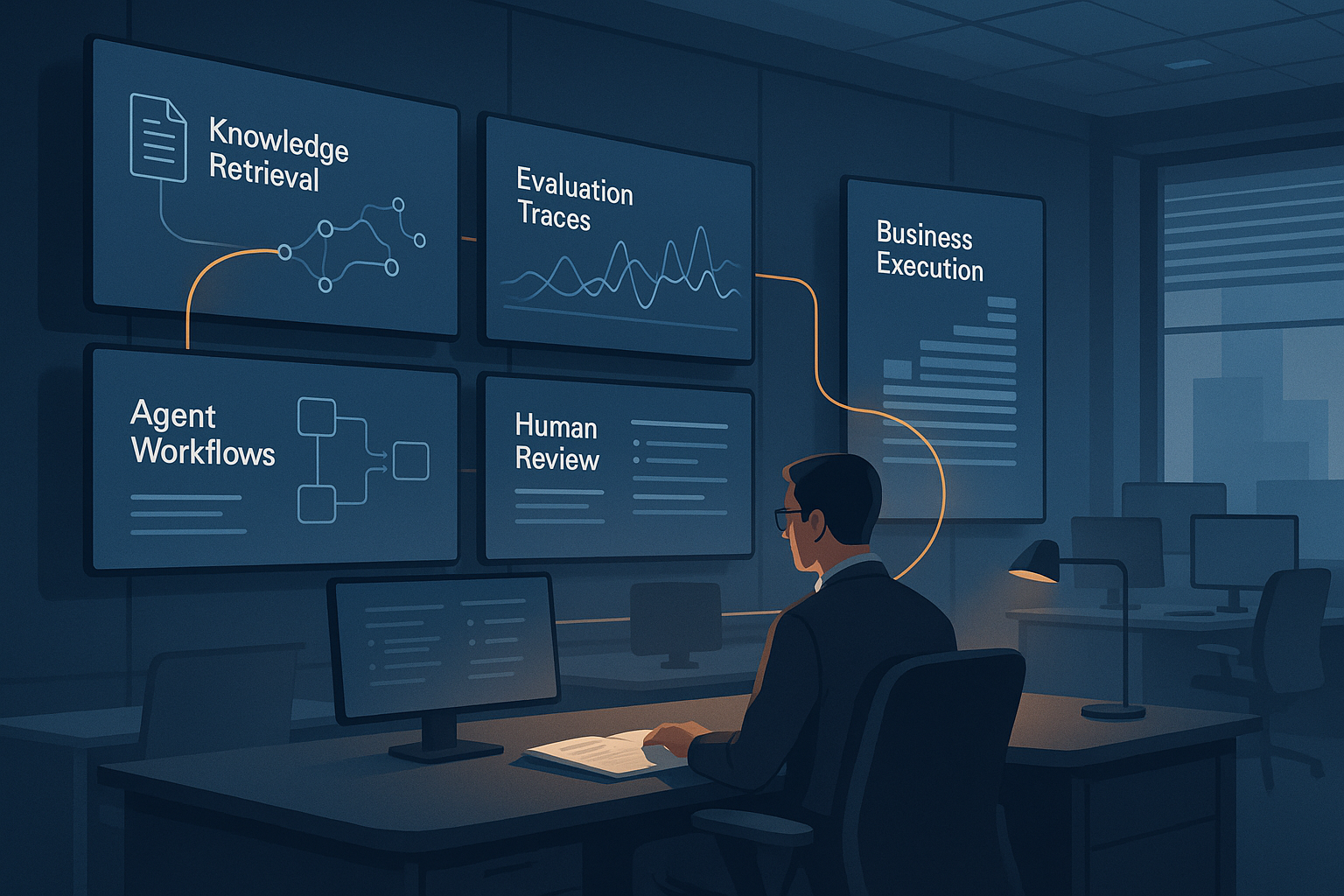

Where OpenAI becomes useful in real operating environments

A useful way to think about OpenAI in 2026 is not as a “chatbot vendor” but as a “workflow substrate” for teams that need AI in live operations.

Consider a mid-market insurer, healthcare operator, or logistics group trying to deploy AI across several layers at once:

- intake and triage of inbound requests

- retrieval against policy documents and operating procedures

- agent workflows that call tools or search the web when needed

- human review for sensitive decisions

- evaluation and trace review before broader rollout

That is the kind of environment where OpenAI’s recent direction matters more, operationally, than raw model quality alone.

The March 2026 push around agent-building tools, built-in web search, retrieval, workflow orchestration, Agent Builder, and trace grading points to a provider trying to reduce the distance between experimentation and managed deployment. That is useful for organizations that want fewer moving parts at the start of an implementation.

Why it matters versus alternative approaches

The alternative path is often one of two extremes.

First: buy a narrow SaaS copilot and adapt the business around the product’s limits.

Second: assemble your own stack from separate model APIs, vector tooling, orchestration logic, evaluation systems, and front-end components.

OpenAI is trying to occupy the middle: enough abstraction to accelerate delivery, but enough infrastructure surface to support operational control.

That creates real value in several areas:

- AI adoption readiness: teams can move from pilot to governed workflow faster because retrieval, agent orchestration, and evaluation are closer together

- workflow efficiency: standard tools for web search, retrieval, and agent workflows reduce custom glue code

- governance: trace inspection, evals, admin controls, and enterprise privacy posture make review and rollout more manageable

- decision support: document-backed search and multi-step workflows are a better fit for policy, knowledge, and exception-heavy environments than plain chat alone

- implementation value: smaller teams can stand up useful systems without designing every layer from scratch

What this looks like in practice

The strongest OpenAI use cases are not generic Q&A. They are structured operating patterns.

A support organization can use retrieval over internal documentation to draft answers, then escalate only ambiguous cases to a stronger review path.
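
The routing decision in that support pattern can be sketched in a few lines. This is a minimal illustration, not a description of any OpenAI API: `Passage`, `Draft`, `route_ticket`, the 0.75 score threshold, and the idea that retrieval returns a similarity score between 0 and 1 are all assumptions made for the example. The model call is replaced by a simple quote of the best passage.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    doc_id: str
    text: str
    score: float  # hypothetical retrieval similarity, assumed to lie in [0, 1]


@dataclass
class Draft:
    answer: str
    route: str  # "auto_draft" or "human_review"
    sources: list[str]


def route_ticket(
    question: str,
    passages: list[Passage],
    min_score: float = 0.75,  # assumed threshold; tune against real tickets
    min_sources: int = 1,
) -> Draft:
    """Draft from documentation when support is strong, otherwise escalate."""
    strong = [p for p in passages if p.score >= min_score]
    if len(strong) < min_sources:
        # Weak or missing documentation is treated as an ambiguous case.
        return Draft(answer="", route="human_review", sources=[])
    # A real system would have a model draft from the retrieved text;
    # here the best passage is quoted so the sketch stays self-contained.
    best = max(strong, key=lambda p: p.score)
    return Draft(
        answer=f"Per {best.doc_id}: {best.text}",
        route="auto_draft",
        sources=[p.doc_id for p in strong],
    )
```

The point of the design is that escalation is decided by evidence quality, not by the model's own confidence, which makes the threshold something a reviewer can audit and adjust.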

A revenue operations team can combine structured prompts, file-backed retrieval, and trace review to build proposal assistants, account-research flows, or contract summarizers that are auditable before they are widely trusted.

A compliance or policy-heavy team can build an agent workflow that retrieves source material, checks for missing evidence, uses web search only when appropriate, and logs the full trace for later grading.
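
As a rough sketch of that compliance workflow, the control flow might look like the following. Everything here is hypothetical: `Trace`, `run_policy_check`, and the `retrieve` and `web_search` callables are stand-ins supplied by the caller, not functions from any OpenAI SDK. The sketch only shows the ordering of steps and what gets logged.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Trace:
    """Ordered record of workflow steps, kept for later grading."""
    steps: list[dict] = field(default_factory=list)

    def log(self, step: str, **detail) -> None:
        self.steps.append({"step": step, **detail})


def run_policy_check(
    claim: str,
    required_evidence: list[str],
    retrieve: Callable[[str], dict[str, str]],  # evidence type -> source id
    web_search: Callable[[str, list[str]], dict[str, str]],
    allow_web: bool = False,  # assumed per-workflow policy switch
) -> tuple[str, Trace]:
    trace = Trace()

    # 1. Retrieve source material from internal documents.
    found = retrieve(claim)
    trace.log("retrieve", found=sorted(found))

    # 2. Check which required evidence is still missing.
    missing = [e for e in required_evidence if e not in found]
    trace.log("check_evidence", missing=missing)

    # 3. Fall back to web search only when the workflow permits it.
    if missing and allow_web:
        extra = web_search(claim, missing)
        trace.log("web_search", found=sorted(extra))
        found = {**found, **extra}
        missing = [e for e in required_evidence if e not in found]
    elif missing:
        trace.log("web_search_skipped", reason="not permitted for this workflow")

    # 4. Record the outcome so a reviewer can grade the full path.
    status = "complete" if not missing else "needs_review"
    trace.log("decide", status=status, missing=missing)
    return status, trace
```

Logging the skipped search as its own step is deliberate: a grader can then distinguish "evidence was unavailable" from "the workflow was not allowed to look".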

A product or PMO function can run evals against representative tasks before changing prompts or models, which is much healthier than shipping prompt edits on instinct and hoping nothing breaks.
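
One way to make that habit concrete is a small regression gate. The sketch below is an assumption-laden illustration rather than OpenAI's eval tooling: `pass_rate`, `should_ship`, and the `max_regression` tolerance are invented names, and each "model" is just a callable from prompt to text so the example runs without any network call.

```python
from typing import Callable

# Each case pairs a representative prompt with a check on the output.
Case = tuple[str, Callable[[str], bool]]


def pass_rate(generate: Callable[[str], str], cases: list[Case]) -> float:
    """Fraction of cases whose output satisfies its check."""
    if not cases:
        raise ValueError("at least one eval case is required")
    passed = sum(1 for prompt, check in cases if check(generate(prompt)))
    return passed / len(cases)


def should_ship(
    baseline: Callable[[str], str],
    candidate: Callable[[str], str],
    cases: list[Case],
    max_regression: float = 0.0,  # assumed tolerance; 0.0 means no regression allowed
) -> tuple[bool, float, float]:
    """Compare a candidate prompt or model against the current baseline."""
    base = pass_rate(baseline, cases)
    cand = pass_rate(candidate, cases)
    return cand >= base - max_regression, base, cand
```

In use, `baseline` and `candidate` would wrap the current and proposed prompt or model, and the change ships only when the first element of the returned tuple is true.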

Those are not flashy demos. They are the kinds of systems that actually change throughput, review burden, and implementation confidence.

Where OpenAI fits best

OpenAI is strongest when an organization wants one provider to cover multiple layers of the rollout:

- workforce enablement through ChatGPT Business or Enterprise

- API-based product or workflow development

- retrieval-backed internal knowledge systems

- agentic workflows with tool use

- evaluation and trace-based refinement

It is less compelling if a team already has strong preferences for a deeply modular best-of-breed stack and is comfortable maintaining orchestration, observability, and governance across several vendors.

Bottom line

OpenAI matters because it is increasingly offering more than intelligence at the model layer. It is offering a more complete operating surface for teams trying to make AI usable, governable, and iterative inside real workflows.

That does not remove the need for architecture discipline. But it does reduce the amount of scaffolding an organization has to invent before it can learn what works.

If your team is sorting out where OpenAI fits in a practical operating model, and where custom architecture, governance controls, or phased rollout matter more than speed, Q52 can help. Our Operational Enablement services and the Q52 Diligence Framework are designed to assess AI readiness, implementation risk, governance needs, and operational fit before those choices get expensive.
